AI Wound Care Documentation Apps: Revolutionizing Nursing Workflows and Patient Outcomes

Nurses spend 19% to 35% of every shift on EHR documentation, roughly 23% of a 12-hour shift, with flowsheets a large part of it. Wound documentation is the worst of it:

- Manual ruler-based measurement overestimates wound area by up to 40%.

- Inter-rater reliability across nurses is poor.

- The EHR was never designed to handle photos.

AI wound care documentation tools now have receipts. Tissue Analytics holds FDA Breakthrough Device designation. Swift Medical claims documentation up to 79% faster than manual. Published smartphone-app studies report ICCs of 0.97 to 1.00 across raters, versus 0.92 to 0.97 for ruler measurement. The pitch is real.

The post-launch reality is messier than the demos. EHR integration, workflow adoption, FDA classification, and image-specific compliance are where projects actually break, and most teams underestimate all four.

This post is for founders, PMs, and engineering leads building a wound app or evaluating one. Plain English on what works in 2026, what's still demo-ware, and what it takes to ship.

What do AI wound care documentation apps actually do well in 2026, and what don't they do yet?

They produce smartphone-based wound measurement more accurate than a ruler, automate tissue composition analysis from photos, and cut nursing documentation time once integrated into shift workflow. They don't autonomously stage pressure injuries or generate OASIS responses; CMS prohibits the latter, and the former still requires clinician judgment.

Key Takeaways:

- The validated tech is narrower than the marketing. Smartphone-based wound measurement and tissue composition analysis are clinically defensible today, with ICC 0.97 to 1.00 across raters. Autonomous staging, infection diagnosis, and AI-generated OASIS responses are not, and CMS prohibits the last one.

- Workflow integration and EHR sandbox timelines are where projects break. Most wound app rollouts fail because the workflow asks for one extra tap nurses don't have time for, and most EHR integration estimates miss by 2x to 3x. The AI capability isn't the bottleneck.

- Deal-closing ROI is retention and HAPI rate reduction, not charting-time headlines. A 30% to 50% reduction in nursing time on wound documentation pays back through retention math at $61,000 per RN departure. Cutting hospital-acquired pressure injury rates moves real dollars under HACRP, SNF VBP, and MIPS.

What AI-Powered Wound Care Documentation Apps Actually Do (And What They Don't Do Well Yet)

An AI-powered wound assessment app turns a smartphone camera into a measurement instrument and a tissue classifier. Snap a photo, get area, depth, tissue composition, and a structured chart entry. The frontier is well-validated for some tasks and demo-ware for others, and the line is sharper than vendors usually admit.

Computer Vision And Wound Measurement: Where The Tech Is Solid

Wound size measurement is the part where a digital wound care platform clearly outperforms a ruler. Smartphone-based computer vision wound analysis, paired with LiDAR depth sensing on iPhone Pro models (iPhone 12 Pro and newer) and recent Pixel Pro hardware, produces real-time wound assessment at an intraclass correlation coefficient (ICC) of 0.97 to 1.00 across raters. Ruler-based measurement comes in at 0.92 to 0.97 and overestimates wound area by up to 40%.

Some platforms use a calibration sticker (Swift Skin and Wound's HealX fiducial marker is the cleanest example); others use LiDAR or stereo vision for true 3D capture. The output is consistent: length, width, surface area, and depth, computed in seconds and pinned to the same anatomy across visits. Wound measurement accuracy at this level is enough for clinical decisions and reimbursement-relevant fields without the human variability that has plagued chart reviews.
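To make the calibration step concrete, here is a minimal sketch of how a marker of known size can turn a segmented wound's pixel count into square centimetres, and why length-times-width ruler math runs high. The function names, the 2 cm marker, and the pixel counts are illustrative assumptions, not any vendor's method.

```python
import math


def cm_per_pixel(marker_diameter_cm: float, marker_diameter_px: float) -> float:
    """Scale factor derived from a fiducial marker of known physical size."""
    return marker_diameter_cm / marker_diameter_px


def wound_area_cm2(wound_pixel_count: int, scale_cm_per_px: float) -> float:
    """Segmented area: number of wound pixels times the area of one pixel."""
    return wound_pixel_count * scale_cm_per_px ** 2


def ruler_overestimate(length_cm: float, width_cm: float) -> float:
    """Fractional overestimate of length x width for an elliptical wound."""
    ruler_area = length_cm * width_cm
    ellipse_area = math.pi * (length_cm / 2) * (width_cm / 2)
    return ruler_area / ellipse_area - 1


# Hypothetical capture: a 2 cm marker spans 200 px, the wound covers 125,000 px.
scale = cm_per_pixel(marker_diameter_cm=2.0, marker_diameter_px=200.0)
area = wound_area_cm2(wound_pixel_count=125_000, scale_cm_per_px=scale)
print(f"{area:.1f} cm^2")                      # 12.5 cm^2
print(f"{ruler_overestimate(5.0, 3.0):.0%}")   # 27%
```

Even a perfectly elliptical wound comes out about 27% high when measured as length times width, because the ruler is really measuring the bounding rectangle. Irregular wound shapes fill even less of that rectangle, which is how the error climbs toward the 40% figure.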

Tissue Composition And Wound Bed Analysis

The next layer up is wound bed analysis. Convolutional neural networks segment the wound surface into granulation, epithelialization, slough, eschar, and necrotic tissue, then return percentages. SeeWound 2 combines LiDAR with tissue assessment algorithms to do the same job with depth, classifying tissue type and where it sits in a 3D reconstruction. Tissue viability assessment at this resolution is useful for tracking healing trajectory visit over visit, which is the actual clinical question most of the time.
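The "return percentages" step is simple once a model has produced a per-pixel mask. A minimal sketch, assuming a hypothetical label scheme (0 for background, 1 to 5 for the tissue classes named above) and a toy mask standing in for real model output:

```python
from collections import Counter

# Hypothetical label ids for a per-pixel segmentation mask; 0 is background.
TISSUE_LABELS = {
    1: "granulation",
    2: "epithelialization",
    3: "slough",
    4: "eschar",
    5: "necrotic",
}


def tissue_percentages(mask: list[list[int]]) -> dict[str, float]:
    """Share of each tissue class among wound pixels, ignoring background."""
    counts = Counter(label for row in mask for label in row if label in TISSUE_LABELS)
    total = sum(counts.values())
    if total == 0:
        return {name: 0.0 for name in TISSUE_LABELS.values()}
    return {
        name: round(100 * counts[label] / total, 1)
        for label, name in TISSUE_LABELS.items()
    }


# Toy 3x4 mask: six granulation pixels, two slough, one eschar, three background.
mask = [
    [0, 1, 1, 1],
    [0, 1, 3, 3],
    [0, 1, 1, 4],
]
result = tissue_percentages(mask)
print(result["granulation"], result["slough"], result["eschar"])  # 66.7 22.2 11.1
```

Storing these percentages per visit, rather than only the rendered overlay, is what makes the visit-over-visit trajectory comparable later.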

Color and edge analysis on top can flag periwound erythema or sudden boundary changes. Treat these as triage, not an infection risk assessment that replaces clinical evaluation. The flag tells the nurse to look harder. It doesn't diagnose.

Staging Assistance Is Not Staging Automation

Wound staging automation is where vendors get loose with the language. NPIAP staging covers six categories:

- Stage 1

- Stage 2

- Stage 3

- Stage 4

- Unstageable

- Deep tissue injury

(Use "pressure injury" rather than "pressure ulcer"; NPIAP retired the older term.) AI can surface the visual evidence (depth, tissue layers, slough coverage) pointing toward a stage. The clinician commits the answer. This isn't a vendor preference. CMS draws the same line for OASIS (see the OASIS-E2 discussion in the EHR integration section). AI assists, clinician stages.

Predictive Analytics: Healing Velocity And Risk Of Amputation

This is where clinical decision support starts earning its keep. Tissue Analytics' Wound Healing Velocity Indicator predicts whether a wound is tracking to close within 4, 8, 12, or 16 weeks, drawing on millions of wound care episodes. The Risk of Amputation Indicator flags lower-extremity wounds heading toward limb loss. Both surface population-level patterns clinicians can't see across a single panel. Neither is autonomous diagnosis. A clinician reviews, factors in context, decides.

What's Still Demo-Ware

Treat the following with skepticism when a vendor walks them through:

- Autonomous staging. No FDA-cleared system stages a pressure injury without a clinician in the loop.

- Autonomous infection diagnosis. Color and edge changes flag risk. They don't diagnose. A platform marketing "AI infection detection" as a finding is overclaiming.

- AI-generated narrative notes that survive an audit. Generative drafts a clinician reviews are useful. Drop-in autonomous chart prose isn't defensible in an OCR audit, and digital wound assessment vendors selling it that way are setting customers up.

- Full automation of OASIS or MDS responses. CMS specifically prohibits this for OASIS-E2 (covered in the EHR integration section).

Validated tools that augment a clinician are real today. Tools that replace one are not. Read every demo through that filter.

Wound Care AI Apps Live Or Die On Nursing Workflow Integration

The technical capabilities covered in the previous section are the easy part. The hard part is whether nurses actually use the thing on shift. Most wound app rollouts don't fail because the AI is wrong. They fail because the workflow asks for one extra tap nurses don't have time for.

The Real Documentation Burden Numbers

Nursing documentation burden is a measurable contributor to turnover, not just an HR talking point. Nurses spend 19% to 35% of every shift on EHR documentation, with about 23% across a 12-hour shift and 31.11% specifically in flowsheets. Inpatient nurse burnout sits above 50%. The national RN vacancy rate is 9.8%, and the 2025 NSI report puts the cost per RN departure at $61,000.

AMIA set a "25x5" goal in 2021 to cut clinician documentation burden by 75% by 2025. We're not on track. A wound care nursing app, or any investment in nursing efficiency tools, has to be a real lever against these numbers, not a marginal improvement bolted on top.

Point-Of-Care UX: The Tap Count That Kills Adoption

Point-of-care documentation has constraints generic mobile UX guides don't cover. The nurse is wearing gloves, often holding the patient's limb with one hand. The camera path needs to be one tap from the chart, defaults predictable, buttons glove-friendly. Mandatory fields that don't match assessment flow get worked around.

Any new step slower than the old workflow's equivalent gets reverted. Nurses go back to paper or to terse free-text notes that defeat the purpose of installing a smart wound care application in the first place. Tap count is the metric to track during pilot, not feature parity with the spec.

Voice, Offline, And The Clinical Environments Most Demos Skip

Voice-to-text for narrative fields helps when it's reviewed before commit. When it isn't, autotranscribed notes become an audit liability the next time OCR or a state surveyor walks through. The pattern:

- dictate

- review on-screen

- commit
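The dictate, review, commit pattern holds up best when the software enforces it rather than the training deck. A minimal sketch of that idea as a small state machine; the class and field names are hypothetical, and a real build would persist the state and the reviewer in the audit log:

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional


class NoteState(Enum):
    DICTATED = auto()
    REVIEWED = auto()
    COMMITTED = auto()


@dataclass
class NarrativeNote:
    text: str                                  # raw voice-to-text output
    state: NoteState = NoteState.DICTATED
    reviewed_by: Optional[str] = None

    def review(self, clinician_id: str, corrected_text: Optional[str] = None) -> None:
        """Clinician reads the transcript on-screen and optionally corrects it."""
        if self.state is NoteState.COMMITTED:
            raise ValueError("committed notes cannot be edited")
        if corrected_text is not None:
            self.text = corrected_text
        self.reviewed_by = clinician_id
        self.state = NoteState.REVIEWED

    def commit(self) -> None:
        """Write to the chart; refuses anything that has not been reviewed."""
        if self.state is not NoteState.REVIEWED:
            raise PermissionError("note must be reviewed before commit")
        self.state = NoteState.COMMITTED


note = NarrativeNote(text="sacral wound with moderate cereal drainage")
try:
    note.commit()                              # blocked: nobody has reviewed it
except PermissionError as err:
    print(err)                                 # note must be reviewed before commit
note.review("rn-042", corrected_text="sacral wound with moderate serous drainage")
note.commit()
print(note.state.name)                         # COMMITTED
```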

Offline mode is non-negotiable in skilled nursing facilities and home health, where connectivity is intermittent and any wound care charting software requiring a live connection breaks the workflow. Swift Medical's mobile-first design syncs offline data automatically once reconnected. Demos run on hospital wifi and never show the SNF basement that's actually the deployment environment. Ask what happens when the radio drops.

Templates By Wound Type (Pressure Injury, Diabetic Foot, Surgical, Stasis, Arterial)

A real wound care workflow automation platform supports the templates, classification systems, and wound care protocols that map to the wound types nurses actually document. Wound dressing documentation, photo cadence, and assessment fields differ across each:

- Pressure injury. NPIAP staging (Stage 1 to 4, unstageable, deep tissue injury). Pressure injury prevention assessments tied to Braden scoring.

- Diabetic foot ulcer. Wagner classification or University of Texas system. Different fields, different vascular and neuropathic context.

- Venous stasis ulcer. CEAP classification. Different photo angles, different surrounding-skin emphasis.

- Arterial ulcer. Pulse and ABI documentation, different etiology entirely.

- Surgical wound. Post-operative assessment scale (POAS), different photo cadence, different infection criteria.

- Traumatic wound. Mechanism-of-injury fields, often urgent.

A platform that uses one generic wound template across all six isn't a clinical product. It's a database with a camera. Nurses notice immediately.
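One way to avoid the generic-template trap is to make wound type the key into an explicit template registry, with no silent fallback. A rough sketch: the classification systems come from the list above, while the field names and the `WoundTemplate` shape are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WoundTemplate:
    classification: tuple[str, ...]   # accepted classification systems, if any
    extra_fields: tuple[str, ...]     # assessment fields specific to this type


# Field names are hypothetical placeholders for a real clinical data model.
TEMPLATES = {
    "pressure_injury": WoundTemplate(("NPIAP",), ("braden_score",)),
    "diabetic_foot_ulcer": WoundTemplate(
        ("Wagner", "University of Texas"), ("vascular_status", "neuropathy_status")
    ),
    "venous_stasis_ulcer": WoundTemplate(("CEAP",), ("surrounding_skin",)),
    "arterial_ulcer": WoundTemplate((), ("pulse", "abi")),
    "surgical_wound": WoundTemplate(("POAS",), ("infection_criteria",)),
    "traumatic_wound": WoundTemplate((), ("mechanism_of_injury",)),
}


def template_for(wound_type: str) -> WoundTemplate:
    """Look up the template; an unknown type is an error, not a generic form."""
    try:
        return TEMPLATES[wound_type]
    except KeyError:
        raise ValueError(f"no template for wound type {wound_type!r}") from None


print(template_for("diabetic_foot_ulcer").classification)
# ('Wagner', 'University of Texas')
```

Failing loudly on an unmapped wound type surfaces the gap during pilot instead of letting a catch-all form quietly absorb it.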

Why Most Rollouts Fail

Most wound care charting software rollouts we've seen fail in the same handful of ways. Real clinical workflow optimization starts before the build, not after bug reports:

- No clinical workflow analysis pre-build. The mismatch between spec and shift surfaces in week 2 of pilot.

- Mandatory fields that don't match real assessment flow. Nurses fake the data or skip the tool.

- No super-user pattern. Without a peer champion on each unit, interdisciplinary communication between nursing, wound care, and physical therapy quietly stops happening.

- No shadow rollout. Going straight from pilot to mandatory leaves no time to fix what pilot revealed.

- Care plan automation that overrides clinical judgment. Nurses ignore auto-generated plans they can't easily override.

- Change management treated as an afterthought. Add the cost to the project plan or watch the rollout reverse.

The same failure modes show up across nursing workflow optimization apps generally. Wound documentation is just where they bite hardest.

EHR Integration Is The Part Most Wound Care Apps Underestimate

EHR integration is where AI wound app projects we've seen consistently miss their dates by 2x to 3x. The technology is there. The compliance and identity layer wrapped around it is where weeks become quarters.

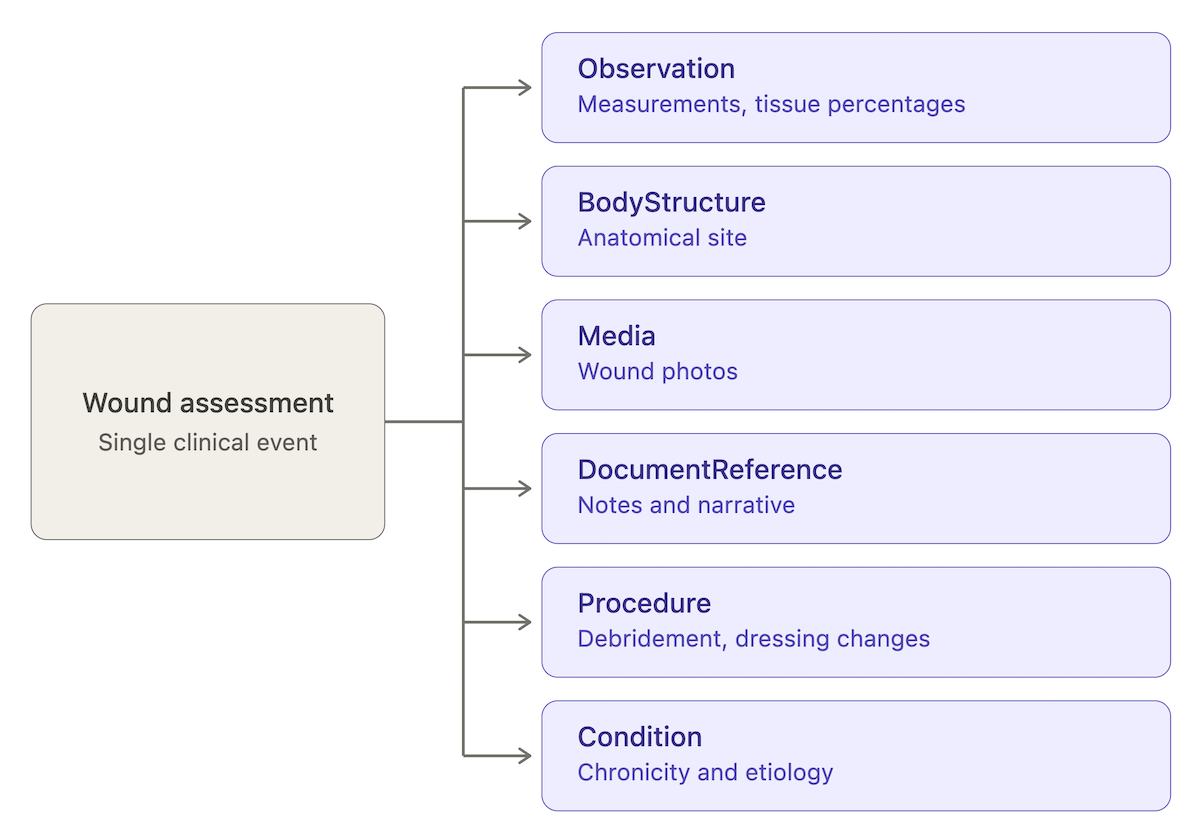

HL7 FHIR R4 Resources That Map To Wound Documentation

A wound assessment doesn't fit a single FHIR resource. It maps across half a dozen, and any AI wound charting system needs to write to all of them and read back coherently:

- Observation. Measurement values (length, width, area, depth) and tissue percentages.

- BodyStructure. Anatomical site (sacrum, left lateral malleolus, etc.).

- Media. Wound photos.

- DocumentReference. Assessment notes and narrative.

- Procedure. Debridement, dressing change, irrigation.

- Condition. Chronicity, etiology, comorbid context.

Tissue Analytics ships SMART on FHIR with Epic, Oracle Health Cerner, and Allscripts/Veradigm; a custom build needs the same coverage. Pressure injury documentation that lives only in DocumentReference loses every downstream reporting and analytics use.
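As a rough illustration of how the pieces link, the sketch below builds one FHIR R4 Observation for a single wound measurement and points it at the BodyStructure and Media resources. The element names (`focus`, `derivedFrom`, `valueQuantity`) are standard R4 Observation elements and the UCUM system URL is real; the code system under example.org, the resource ids, and the helper function are placeholders, and a production build would use whatever terminology its EHR partners require.

```python
import json

# Placeholder code system: nothing under example.org is a real terminology.
HYPOTHETICAL_CODE_SYSTEM = "http://example.org/wound-measurements"


def wound_measurement_observation(patient_id, body_structure_id, media_id,
                                  measurement, value_cm, taken_at):
    """Build one Observation for a linear wound measurement in centimetres."""
    return {
        "resourceType": "Observation",
        # "preliminary" until the assessing clinician confirms the value.
        "status": "preliminary",
        "code": {
            "coding": [{"system": HYPOTHETICAL_CODE_SYSTEM, "code": measurement}],
            "text": f"Wound {measurement}",
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "focus": [{"reference": f"BodyStructure/{body_structure_id}"}],
        "derivedFrom": [{"reference": f"Media/{media_id}"}],
        "effectiveDateTime": taken_at,
        "valueQuantity": {
            "value": value_cm,
            "unit": "cm",
            "system": "http://unitsofmeasure.org",   # UCUM
            "code": "cm",
        },
    }


obs = wound_measurement_observation(
    patient_id="example-patient",
    body_structure_id="example-sacrum",
    media_id="example-photo",
    measurement="length",
    value_cm=4.2,
    taken_at="2026-04-02T09:30:00Z",
)
print(json.dumps(obs, indent=2))
```

Keeping each measurement as its own Observation, linked back to the photo it was derived from, is what lets reporting and analytics query the values without parsing narrative text.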

SMART On FHIR And The Sandbox Timelines Nobody Shows In The Pitch

EHR integration timelines get underestimated by 2x to 3x in every wound app build we've audited. The cause isn't the spec. It's the integration program path.

Each major EHR vendor runs its own developer program with its own sandbox provisioning, OAuth scope approval, and security review:

- Epic Showroom (renamed from App Orchard)

- Oracle Health Cerner Code

- Meditech Greenfield

- Athenahealth Marketplace

- Veradigm (formerly Allscripts)

Sandbox provisioning takes weeks. OAuth scope approval and security review add months. By the time you've validated against test patient data, signed the integration agreement, and scheduled go-live with a customer's IT, the original 6-week estimate is 6 months. The EHR sandbox is where wound app projects go to die.

OASIS-E2 (Effective April 2026) And The CMS Rule That Constrains AI

OASIS-E2 went into effect April 1, 2026, and any home health AI wound app needs to know exactly what changed. The wound-relevant items:

- M1306, M1311, M1322, M1324 (pressure ulcer/injury staging)

- M1330, M1334 (stasis ulcer)

- M1340, M1342 (surgical wound)

The CMS guidance manual draws a line aimed specifically at AI: "An agency's software may not 'answer' or 'generate' the OASIS response for the assessing clinician."

The implication for wound assessment protocols is direct. AI surfaces evidence (measurement values, tissue percentages, prior comparisons) and pre-fills measurement fields. The clinician commits the OASIS response. A workflow that auto-populates the response itself, even from an AI confident in the answer, violates the rule. Build the human-in-the-loop step into the architecture, not the training docs.
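"Into the architecture" can be as simple as a data model where AI output and the OASIS response live in different fields, and only a clinician action can write the second one. A minimal sketch under that assumption; the class names are hypothetical, the response value is a placeholder rather than a real OASIS code, and Python conventions alone won't stop a determined caller, so a real system would enforce this at the API and database layers too.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class AiEvidence:
    summary: str   # a measurement or comparison, never a proposed response


@dataclass
class OasisItem:
    item_id: str
    evidence: list[AiEvidence] = field(default_factory=list)
    # init=False: a response cannot be supplied when the item is created.
    response: Optional[str] = field(default=None, init=False)
    committed_by: Optional[str] = field(default=None, init=False)

    def attach_evidence(self, evidence: AiEvidence) -> None:
        """The only write path open to the AI pipeline."""
        self.evidence.append(evidence)

    def commit(self, response: str, clinician_id: str) -> None:
        """The only write path for the response; requires a clinician identity."""
        if not clinician_id:
            raise PermissionError("an assessing clinician must commit the response")
        self.response = response
        self.committed_by = clinician_id


item = OasisItem("M1311")
item.attach_evidence(AiEvidence("area 12.5 cm^2, down from 14.0 cm^2 at prior visit"))
print(item.response)                     # None: evidence alone never answers the item
item.commit(response="<clinician-selected value>", clinician_id="rn-042")
print(item.committed_by)                 # rn-042
```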

MDS 3.0 Section M For Skilled Nursing Facilities

In SNFs, MDS 3.0 Section M captures pressure injury staging, present-on-admission status, and healing trajectory. It feeds directly into SNF Value-Based Purchasing exposure, which means miscoded staging affects facility payment, not just chart accuracy. Wound care reporting from an AI-assisted workflow has to mirror Section M's field structure exactly. The same human-commit rule applies as OASIS: AI assists, the assessing clinician answers. Drainage documentation, exudate volume, and tissue type all roll up into the staging answer that drives the payment math.

Wound Care Registries And Quality Reporting

Two registries matter for wound care standards and quality measure reporting: the U.S. Wound Registry (USWR) and the Alliance of Wound Care Stakeholders (AWCS). USWR captures structured wound data for MIPS quality measure reporting and benchmarking against national outcomes. IPPS-tied measures for hospital-acquired pressure injuries route through the same general path. A wound app aimed at a hospital or wound center customer should plan for registry submission as part of the export path, not a v2 feature.

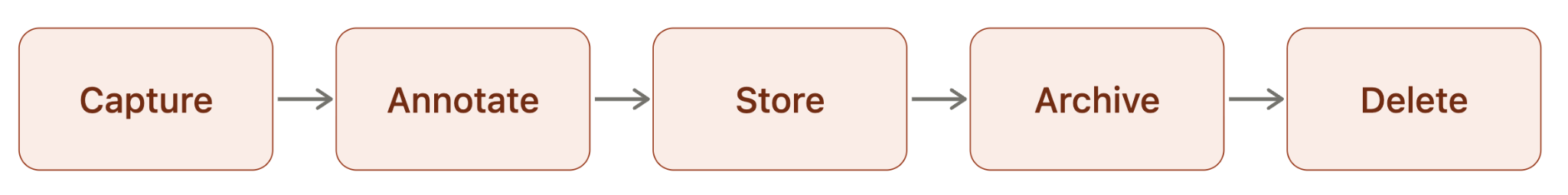

Photography Is The Compliance Edge Case Most Wound Care Documentation Solutions Skip

Wound photography sits in a compliance class most healthcare app teams haven't engaged with. Generic HIPAA framing doesn't get you there. The image lifecycle, clinical photography integration with the chart, FDA classification when AI makes clinical claims, and procurement-grade certifications are where wound care compliance actually lives.

Wound Photos Are PHI. The Standard HIPAA Framing Doesn't Engage With This.

A wound photo is PHI. Identifiable body region plus diagnosis context, every time. The standard HIPAA compliant healthcare apps framing for HIPAA wound documentation covers auth, encryption at rest, BAA scope, and audit logging. None of that engages with the image-specific lifecycle:

- Capture. Camera roll, in-app encrypted store, or both?

- Annotate. Markup, measurement overlays, fiducial calibration before commit to chart.

- Store. Image location, region of residency, encryption keys, subprocessor chain.

- Archive. Retention policy by wound state (active, healed, deceased patient).

- Delete. Cascade deletion on consent revocation, patient rights requests, retention expiry.

OCR has fined providers for photos in unauthorized cloud storage, lost devices with cached images, photos forwarded to personal email, and screenshot artifacts in shared drives. Clinical wound documentation that skips a link in the lifecycle leaves a gap the audit will find.

Consent For Wound Photography: Separate, Documented, Auditable

Wound photography consent should be separate from the general HIPAA acknowledgment. Patients agree to wound photos for clinical care; that's not the same as consenting to use in AI training data, research, or marketing. Wound photography standards recommend this posture, and most teams skip it anyway. A defensible consent flow has three properties:

- Scope is explicit. Each downstream use is named, not buried under "operations."

- Revocation is operational. A patient revoke cascades deletion across all stores and any AI training datasets. Not a manual ticket. A cascade.

- The record is auditable. Timestamped, versioned, retrievable on demand. A 2024 consent form may not match what your platform does in 2026.

Audit conversations turn on the third one.
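A sketch of what those three properties might look like in code: named scopes, a versioned and timestamped record, and a revocation that fans out to every store in one call. The scope names, store names, and in-memory dictionaries are stand-ins for illustration; real stores would be object storage, the chart, and any training datasets.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ConsentRecord:
    patient_id: str
    scopes: frozenset                 # each downstream use named explicitly
    form_version: str                 # which consent form the patient signed
    granted_at: datetime
    revoked_at: Optional[datetime] = None

    def allows(self, use: str) -> bool:
        return self.revoked_at is None and use in self.scopes


def revoke(consent: ConsentRecord, stores: dict, now: datetime) -> dict:
    """Mark consent revoked and delete the patient's images from every store.

    Returns per-store deletion counts so the cascade itself is auditable.
    """
    consent.revoked_at = now
    return {
        name: len(store.pop(consent.patient_id, []))
        for name, store in stores.items()
    }


consent = ConsentRecord(
    patient_id="p1",
    scopes=frozenset({"clinical_care", "ai_training"}),
    form_version="2026-01",
    granted_at=datetime(2026, 1, 5, tzinfo=timezone.utc),
)
stores = {
    "chart_images": {"p1": ["img-a", "img-b"], "p2": ["img-c"]},
    "training_set": {"p1": ["img-a"]},
}
print(consent.allows("marketing"))     # False: never named in scope
deleted = revoke(consent, stores, now=datetime(2026, 6, 1, tzinfo=timezone.utc))
print(deleted)                         # {'chart_images': 2, 'training_set': 1}
print(consent.allows("clinical_care")) # False after revocation
```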

FDA SaMD Classification When AI Does Measurement Or Staging

When an AI wound app makes clinical claims (measurement, staging, healing prediction), it's Software as a Medical Device. Class II 510(k) is the typical pathway. The FDA cleared 295 AI-enabled medical devices in 2025, a record per FDA's published list, with 62% SaMD and 63% diagnostic.

Predicate strategy matters. Swift Medical's and eKare's clearances can serve as predicates a new entrant cites to compress submission time. Tissue Analytics' FDA Breakthrough designation is worth tracking, but a designation is not a clearance and cannot itself be cited as a predicate. Without a predicate, you're in De Novo territory, which adds 6 to 12 months. Picking a predicate before writing the clinical evaluation plan is the difference between a 12-month and a 24-month path.

PCCP And Why It Changes Post-Market AI Updates

Two FDA guidances reshape AI wound app submissions in 2026. The draft guidance on AI-enabled device software functions (DSF), issued January 7, 2025, frames the Total Product Life Cycle approach: model description, data lineage, performance tied to the cleared claim, bias analysis, and human-AI workflow are all expected submission elements.

The PCCP final guidance (December 2024) is the bigger operational change. A Predetermined Change Control Plan lets you pre-specify algorithm update controls (retraining cadence, validation thresholds, deployment guardrails) at submission. With a PCCP in place, a model retrain inside the pre-specified envelope doesn't require a new submission. Without one, every meaningful update routes through a fresh 510(k). For continuous-improvement AI products, that's the difference between shipping monthly and shipping yearly.
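Operationally, the pre-specified envelope tends to end up as a release gate in the model pipeline. The sketch below shows one possible shape for that gate. Every threshold and metric name is a made-up example, not something drawn from the guidance; the actual envelope is whatever the submission commits to.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeEnvelope:
    min_icc: float                    # validation threshold fixed at submission
    max_area_error_pct: float         # worst acceptable mean area error
    min_days_between_retrains: int    # retraining cadence guardrail


def envelope_violations(env: ChangeEnvelope, icc: float, area_error_pct: float,
                        days_since_last_retrain: int) -> list[str]:
    """Return the guardrails a candidate model breaks; empty means inside."""
    violations = []
    if icc < env.min_icc:
        violations.append("icc below threshold")
    if area_error_pct > env.max_area_error_pct:
        violations.append("area error above threshold")
    if days_since_last_retrain < env.min_days_between_retrains:
        violations.append("retrained too soon")
    return violations


# Hypothetical envelope and candidate metrics.
envelope = ChangeEnvelope(min_icc=0.95, max_area_error_pct=5.0, min_days_between_retrains=30)
print(envelope_violations(envelope, icc=0.97, area_error_pct=3.1, days_since_last_retrain=45))
# []
print(envelope_violations(envelope, icc=0.93, area_error_pct=3.1, days_since_last_retrain=10))
# ['icc below threshold', 'retrained too soon']
```

A candidate that returns an empty list ships under the plan; anything else is held back and routed to regulatory review rather than deployed.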

HITRUST And SOC 2 Type II: What They Buy You In Procurement

HITRUST and SOC 2 Type II aren't legal substitutes for HIPAA. They're increasingly required for hospital procurement. Swift Medical's HITRUST-aligned and SOC 2 Type II posture is the working example: hospital security review teams accept those certifications as signal that institutional controls are in place.

For automated wound documentation platforms aiming at hospitals, plan for HITRUST as a 9-to-12-month effort and budget item, not a v3 nice-to-have. The honest take on regulatory compliance: "HIPAA-compliant" is marketing language. "Defensible in an audit" and "passes hospital security review" are the actual procurement and patient safety metrics. Subprocessor chains, BAA scope, image storage location, and retention policy are the line items reviewers ask about.

The ROI Math For Wound Care AI Applications That Actually Closes Deals

Most ROI claims for AI wound apps don't survive procurement scrutiny. The headline numbers are real. The conditions attached to them are usually missing from the deck. The math that holds up looks like this.

The 60% To 79% Charting Time Reduction Claim, Pressure-Tested

Swift Medical claims wound documentation up to 79% faster than manual. We'd urge buyers to read that as a vendor maximum, not a budget input. Peer-reviewed work on plain workflow redesign without AI showed 18.5% EHR time reduction with 1.5 to 6.5 minutes saved per reassessment per patient (PMC 9300261, Implementing Best Practices study). The honest framing for any wound care documentation app evaluation: separate the "AI uplift" from the "any modernization uplift." Both are real. The marginal AI contribution isn't 79%.

A defensible number to plan around is 30% to 50% reduction in nursing time on wound documentation tasks once the workflow is integrated, with the upper end available when the app replaces a particularly broken manual flow. Still material when mapped across an FTE pool.

Accuracy Gains And Why They Translate To Reimbursement

Wound documentation automation pays back twice: nursing time savings and miscoding avoided. AI measurement hits an intraclass correlation coefficient (ICC) of 0.97 to 1.00 across raters versus 0.92 to 0.97 for ruler, and manual measurement overestimates wound area by up to 40%. Both tails matter. Over-measurement leads to over-debridement and over-resourced care. Under-measurement understates severity and risks misstaging.

Misstaging is the reimbursement problem. MDS 3.0 Section M and OASIS-E2 wound items both feed payment math. A Stage 3 documented as Stage 2 affects reimbursement on one side and patient safety on the other. Wound healing documentation that holds up under chart review protects both. The same documentation feeds healing time tracking and wound healing progression analytics that prove care quality visit over visit.

Wound Prevalence Reduction And CMS Payment Implications

Swift Medical reports a 43% reduction in wound prevalence in skilled nursing facility deployments. That's a vendor claim, and any prevalence number's methodology is worth scrutiny. The downstream payment math is independent of the source.

Three CMS programs make wound prevalence directly financial:

- HACRP (Hospital-Acquired Condition Reduction Program). Hospital-acquired pressure injuries (HAPIs) feed the score. Bottom-quartile hospitals take a 1% IPPS payment reduction across all Medicare admissions for the year.

- SNF VBP (Value-Based Purchasing). SNF performance on quality measures driven by MDS 3.0 Section M data affects payment. Pressure injury indicators are part of the bundle.

- MIPS quality reporting. Wound care quality metrics and chronic wound management measures route through MIPS for eligible clinicians.

A facility cutting HAPI rates moves real dollars. Wound care outcomes improve in the same motion. That's the deal-closing number, not the documentation-time savings.

Staff Retention Math

Documentation burden is a measurable contributor to nurse burnout, which sits above 50% in inpatient settings. With a national 9.8% RN vacancy rate and $61,000 cost per RN departure (2025 NSI report), retention math becomes a budget conversation. A wound tracking software platform that gives a unit nurse back 30 minutes per shift on documentation translates into measurable annual capacity recovery, not just sentiment improvement.

This is the part of the ROI story most vendor decks underweight. Time savings that map to retention math are durable. Quality improvement initiatives tied to documentation typically aren't.
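A back-of-envelope version of that math, as a sketch. The $61,000 figure and the 30 minutes per shift come from above; the shift count, unit size, and number of departures avoided are hypothetical inputs to swap for your own.

```python
COST_PER_RN_DEPARTURE = 61_000   # 2025 NSI figure cited above


def hours_recovered_per_year(minutes_saved_per_shift: float,
                             shifts_per_nurse_per_year: int,
                             nurses: int) -> float:
    """Annual nursing hours returned to a unit by per-shift time savings."""
    return minutes_saved_per_shift / 60 * shifts_per_nurse_per_year * nurses


def retention_savings(departures_avoided: int) -> int:
    """Turnover cost avoided, using the per-departure figure above."""
    return departures_avoided * COST_PER_RN_DEPARTURE


# Hypothetical unit: 20 nurses, 150 shifts each per year, 30 minutes saved per shift.
hours = hours_recovered_per_year(30, shifts_per_nurse_per_year=150, nurses=20)
print(hours)                    # 1500.0
print(retention_savings(2))     # 122000
```

The hours figure is the capacity argument; the dollar figure only holds if the time savings can credibly be tied to departures avoided, which is the part worth measuring during pilot.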

Build Vs Buy: When Does Custom Make Financial Sense

Chronic wounds affect roughly 6.5 million Americans and 1% to 2% of the global population. A market that large and that varied doesn't have one build-vs-buy answer.

Buy (Tissue Analytics, Swift Medical, eKare) when:

- Your use case maps to a validated platform's capabilities.

- A 510(k) predicate already exists in the platform's lineage.

- Per-seat licensing fits your unit economics.

- You need go-live in months, not quarters.

Build a custom wound care documentation app when:

- The workflow is proprietary or multi-condition (diabetic wound tracking, acute wound documentation, and post-surgical wound tracking under one shell).

- You want raw-data ownership for downstream ML training.

- Equity ownership and code as an asset matter to your business model.

- You expect the workflow to drift outside what off-the-shelf platforms cover.

The honest take: 60% to 79% headline charting time reductions are achievable, conditional on workflow integration. Without integration, the AI saves nothing because nurses don't use it.

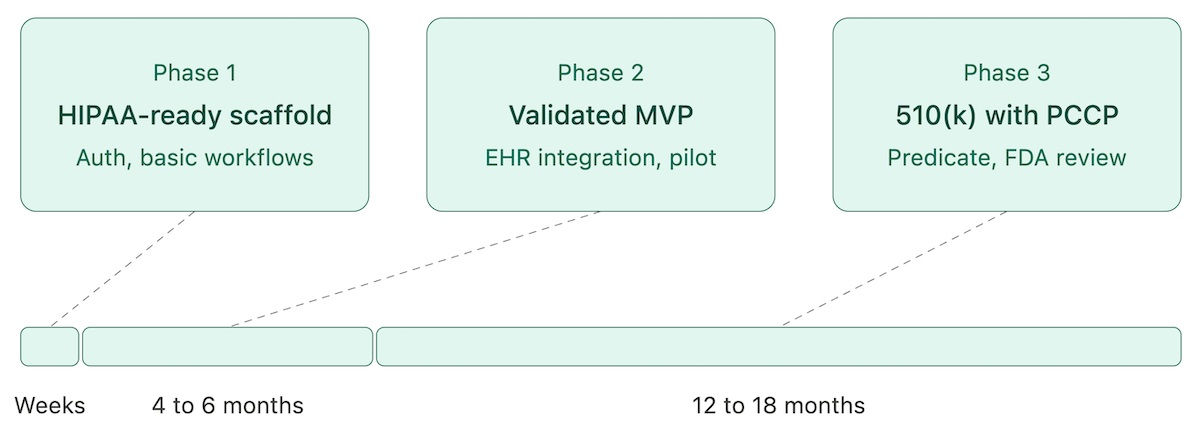

Wound Care Documentation App Development: Real Timelines, Team Composition, And Cost Ranges

The back half of a wound care mobile app development project is where it breaks. The HIPAA-ready scaffold, auth, and basic workflows: weeks. The clinical validation, EHR integration, and FDA pathway: most of the budget and most of the calendar.

Architecture: Mobile-First, Edge Vs Cloud Inference

Mobile-first iOS and Android. iOS gets LiDAR access on iPhone Pro models, which matters for depth-aware wound assessment app development.

The architectural decision that drives the rest:

- On-device inference (Core ML, TensorFlow Lite, ONNX Runtime). Lower latency, no PHI leaves the device, harder to update models centrally.

- Cloud inference. Faster model iteration, harder PHI containment, latency dependent on network.

Most production mobile wound care solutions run AI image recognition on-device for patient-facing capture and use cloud for non-PHI aggregation, model improvement, and clinician dashboards. Image storage encrypted at rest with regional residency. A FHIR middleware layer fronts the EHR sync so the app isn't entangled with a single EHR's quirks.

Team Composition For A Clinical-Grade Build

A clinical-grade wound assessment app development team typically runs 5 to 9 people:

- Nurse or wound care specialist advisor. Often missing from early teams. Costs the project months when it's not there.

- Clinical informaticist. FHIR resource modeling, EHR integration design, terminology mapping.

- ML engineer for vision models. Trains and validates automated wound classification and machine learning wound prediction models.

- Mobile engineers (iOS and Android). LiDAR access and platform-specific capture quirks justify dedicated specialists.

- Backend engineer. FHIR server, auth, data layer, audit logging.

- QA with healthcare experience. Generic QA misses HIPAA edge cases.

- Regulatory consultant. When the app pursues 510(k), this hire happens early or it doesn't help.

Realistic Timeline Phases

Three phases that map to actual ship-dates:

- Phase 1: HIPAA-ready scaffold (weeks). Auth, basic workflows, evidence-based wound care templates, sandboxed deployment. This is where a tool like Specode collapses what used to be a 2-month effort.

- Phase 2: Clinically-validated MVP without FDA submission (4 to 6 months). EHR integration, clinical pilot, super-user training, workflow refinement. Tight scope, single use case.

- Phase 3: 510(k) clearance with PCCP (12 to 18 months). Required when the app makes clinical claims. Predicate selection, clinical evaluation, submission, FDA review cycles.

Skip the validation step and the rollout reverses inside a quarter.

Cost Ranges And What's Usually Missing From The Estimate

Industry benchmarks for a clinical-grade wound care mobile app development project run $300,000 to $800,000. That number usually doesn't include:

- HIPAA-compliant infrastructure. $10,000 to $30,000 per year baseline.

- FDA submission with regulatory consultancy. $50,000 to $150,000 external, on top of internal team time.

- Clinical validation studies. Variable, but a single-site IRB-reviewed study runs into six figures.

- EHR integration. Underestimated by 2x to 3x in nearly every project we've seen.

Pilot strategy matters as much as budget. Shadow rollout first, super-user pattern, measurable success criteria. The same failure patterns show up across healthcare app development generally and bite hardest in wound app builds.

The honest take: AI handles the scaffold. The expensive 70% is the back half.

How Specode Builds AI Wound Care Technology That Ships To Production

Specode is an AI-assisted healthcare app builder. We help teams ship the parts of a wound app that fit our stack, and we say plainly which parts don't.

What Specode Builds (And What's Out Of Scope)

In scope:

- HIPAA-ready scaffold with auth and BAA-backed hosting.

- Custom data model, screens, workflows, role permissions.

- EHR integration patterns via FHIR.

- HIPAA Compliance Agent: a multi-agent scanner that flags issues by Critical, High, Medium, and Low severity in 3 to 4 minutes.

- Full code export at any time, no vendor lock-in.

Out of scope:

- Custom computer vision model training for wound measurement, tissue analysis, or staging.

- FDA 510(k) submission and clinical validation studies.

- NPIAP-specific staging logic that requires clinical product judgment.

These need partner work or in-house clinical and regulatory hires. We say so up front.

Talk To Us

The build path differs sharply between a wound app for an existing EHR-integrated provider workflow and a consumer-facing wound tracker with no EHR sync. A discovery call is faster than any spec doc for figuring out which one applies. Bring the use case, the deployment context (hospital, SNF, home health, consumer), and any FDA pathway thinking. We'll tell you what it takes to build a wound care app on this stack and what it takes outside of it.

Frequently asked questions

AI wound measurement hits an intraclass correlation coefficient (ICC) of 0.97 to 1.00 across raters versus 0.92 to 0.97 for ruler-based methods. Manual measurement overestimates wound area by up to 40%.

Pressure injuries, diabetic foot ulcers, venous stasis ulcers, arterial ulcers, surgical wounds, traumatic wounds, and chronic wounds. Each uses a different classification system (NPIAP, Wagner, CEAP, POAS) and assessment fields.

Integration runs through SMART on FHIR. Tissue Analytics ships with Epic, Oracle Health Cerner, and Allscripts/Veradigm. Plan for 2x to 3x your initial integration timeline estimate.

HIPAA, state nursing board documentation rules, FDA SaMD classification (typically Class II 510(k)) when making clinical claims, and CMS-specific requirements like OASIS-E2 and MDS 3.0 Section M.

Adoption works when the new workflow saves time within the first shift. It fails when any new step is slower than the old one. The super-user pattern matters.

Documentation-time savings show in weeks. Reimbursement uplift, prevalence reduction, and retention math show in 6 to 12 months. The deal-closing numbers are the longer ones.

AI flags peri-wound color changes, edge irregularities, and tissue composition shifts that warrant clinical attention. It doesn't diagnose infection. Treat the flag as triage, not finding.