Vibe Coding a Healthcare App? Here's What Nobody Tells You About PHI

Last weekend, a solo founder spun up a therapy journaling app in 48 hours using Cursor and GPT-4. Sleek UI, working backend, cloud-synced notes — ready for beta testers by Monday. There was just one problem: every journal entry contained protected health information, and not a single line of that AI-generated code knew it.

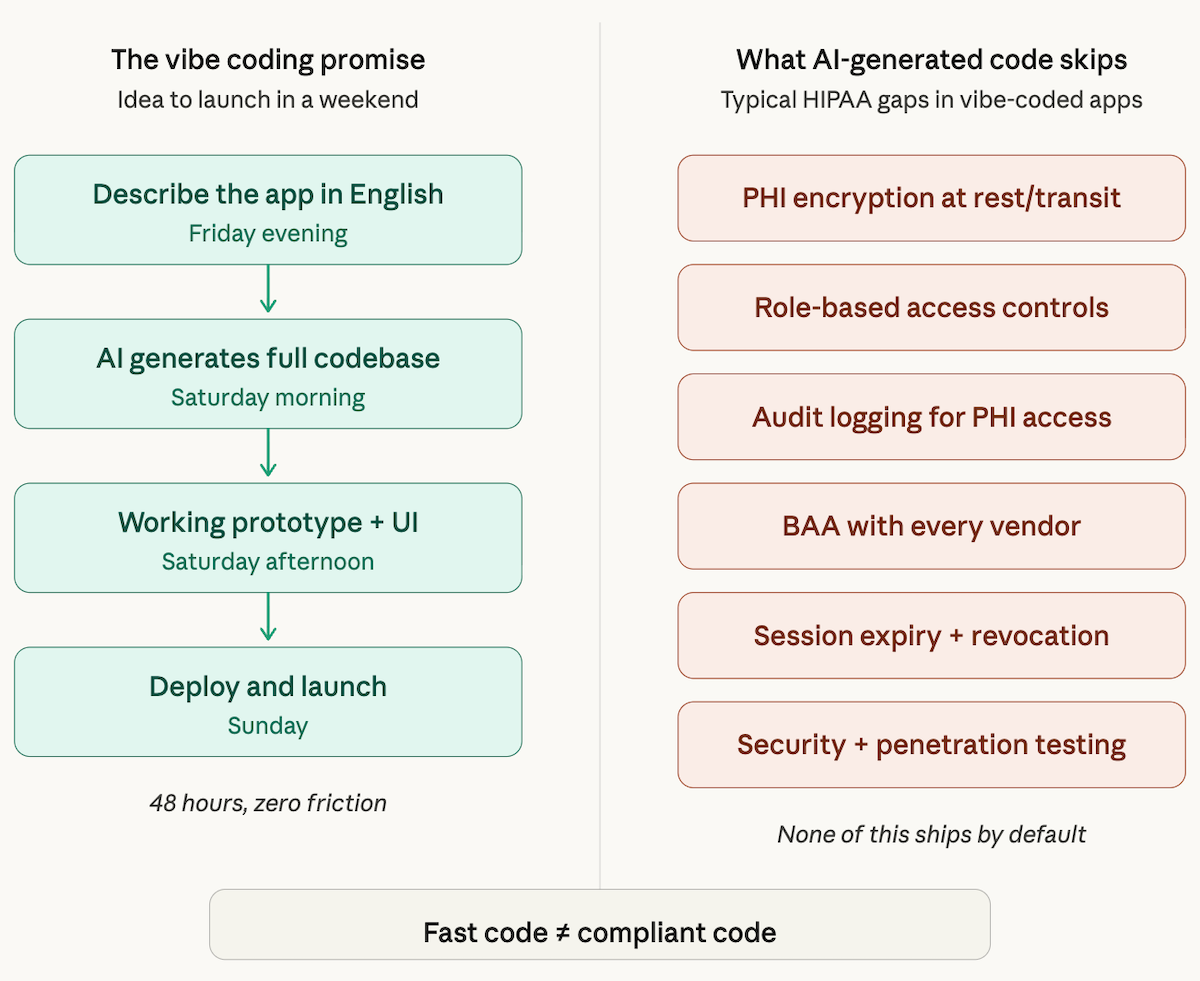

This is the tension at the heart of the vibe coding healthcare app movement. The speed is real. The creative momentum is real. But the compliance gaps hiding in that auto-generated codebase? Those are real too — and they come with fines that start at $100 per violation and scale to $2 million per category, per year.

This article isn't here to kill your excitement about rapid app development in healthcare. It's here to make sure your healthcare app launch doesn't end with an OCR investigation instead of a Product Hunt feature.

Can you vibe code a HIPAA-compliant healthcare app?

Yes, but not with generic AI coding tools alone. Standard AI code generators have no awareness that your data may be PHI — they won't add encryption, audit logging, or access controls unless explicitly prompted. To ship safely, you either need to manually layer compliance onto every line of AI-generated code, or build on a platform like Specode that has HIPAA-ready infrastructure and PHI-aware data models baked in from the start.

Key takeaways:

- Your app probably handles PHI and you might not realize it. If any health-related data links to a name, email, device ID, or appointment date, it's PHI — and HIPAA applies to your entire codebase that touches it.

- AI code generators create compliant-looking code that isn't. Expect missing encryption, no audit logs, hallucinated security functions, and zero BAA coverage — all hidden behind code that passes a demo but fails an audit.

- You can still move fast — with the right foundation. Purpose-built healthcare AI platforms give you vibe-coding speed without the compliance debt that takes months to unwind.

What Is Vibe Coding? (And Why Healthcare Devs Are Paying Attention)

Vibe coding is the practice of building software by describing what you want in natural language and letting an AI code generation tool — Cursor, GitHub Copilot, Replit AI, Claude, or similar — write the implementation. You focus on product vision and UX flow; the AI handles the boilerplate, business logic, and even database schemas.

For healthcare developers, the appeal is obvious. Working with a traditional healthcare app development company typically means development cycles of 6–18 months. A vibe coding medical app approach can compress that to days or weeks. You go from napkin sketch to working prototype before your compliance officer finishes reading the first paragraph of the HIPAA Security Rule.

That compression is why founders building everything from symptom trackers to telehealth platforms are experimenting with AI-assisted workflows. And for non-regulated features — onboarding flows, marketing pages, scheduling UIs — it works beautifully. The danger starts when that same fast-and-loose workflow touches data that falls under federal regulation.

What Is PHI — and Why It's Not Just a Checkbox

Protected health information (PHI) is any individually identifiable health information created, received, maintained, or transmitted by a covered entity or its business associates. That definition is broader than most founders expect.

PHI isn't limited to diagnosis codes and lab results. Under HIPAA, there are 18 specific identifiers that, when linked to health information, make data PHI. These include names, dates (birth, admission, discharge), phone numbers, email addresses, Social Security numbers, medical record numbers, device identifiers, biometric data, and even full-face photographs.

Here's where it gets tricky for app builders — and where vibe coding PHI mistakes are most common: your app doesn't need to look like an electronic health records system to handle PHI. A mood-tracking app that stores a user's name alongside their anxiety scores? PHI. An appointment reminder tool that logs patient data with visit dates? PHI. A clinical data dashboard that pulls from an EHR API? Definitely PHI.

The distinction between PHI and de-identified data is critical but deceptively hard to implement. The Safe Harbor method requires stripping all 18 identifiers — and LLMs frequently get this wrong, leaving fragments of identifying information in supposedly anonymized datasets. If your app touches anything that connects a person's identity to their health status, you're in PHI territory, and every line of code that handles that data needs to reflect it.

The Hidden Risks of Vibe Coding a Healthcare App

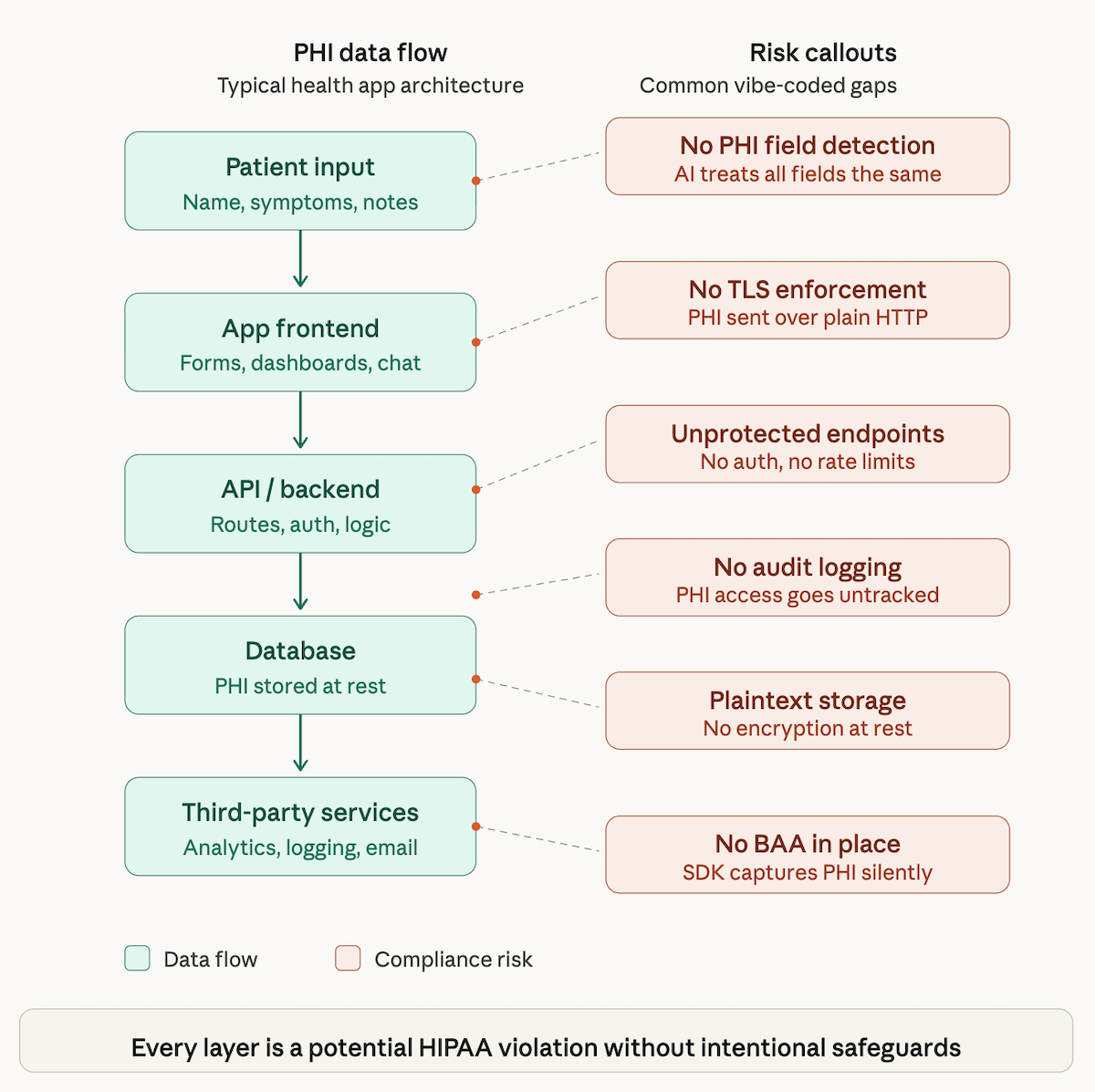

Here's the core problem: AI code generators are trained on millions of general-purpose repositories. They're excellent at producing functional code. They have zero awareness that a database field called user_notes might contain PHI, or that a field named appointment_date linked to a patient_id creates a regulated data set.

This blind spot produces a cascade of vibe coding health app risks that most builders don't catch until it's too late.

No Encryption by Default

LLM-generated code routinely stores data in plaintext — locally, in transit, or both. Data encryption at rest and in transit isn't a nice-to-have in healthcare; it's a baseline requirement. Yet generic AI tools almost never implement AES-256 encryption for stored PHI or enforce TLS 1.2+ for data transmission unless you explicitly and precisely prompt for it.

Missing Audit Logs

HIPAA requires that you track who accessed what PHI, when, and what they did with it. Audit logs are almost never produced by AI code generators unprompted. Your vibe-coded app might have beautiful CRUD operations, but if there's no immutable access trail, you've got a compliance gap that an auditor will find in minutes.

No Business Associate Agreement Coverage

This is the one that catches even experienced builders off guard. If you're using an AI tool to process, view, or generate code that contains actual PHI — say, pasting patient data into a prompt to debug an issue — you may need a business associate agreement (BAA) with that tool provider. Most vibe coding tools (Cursor, Copilot, Replit) do not offer a BAA. Using them to process PHI may itself constitute a HIPAA violation.

Insecure API Endpoints

AI-generated backends frequently expose endpoints without proper authentication, rate limiting, or role-based access. A single unprotected /api/patients route is a data breach waiting to happen.

Hallucinated Security Features

Perhaps the most dangerous risk: AI hallucinations in code. An LLM might generate a function called encryptPHI() that looks legitimate but implements a broken or non-standard encryption method. Security vulnerabilities wrapped in confident-looking code are harder to catch than obviously missing features.

The cumulative effect of these gaps turns data security from a feature into a liability. Each one is fixable in isolation, but LLM-generated code tends to produce them all simultaneously — and founders who aren't looking for them won't find them.

What HIPAA Actually Requires from Your Code

HIPAA compliance isn't a single certification you purchase. It's a set of administrative, physical, and technical safeguards that your application must implement and maintain. For vibe coding HIPAA compliance, the technical safeguards are where the rubber meets the road.

Here's what the Security Rule actually demands at the code level — and how it compares to what AI tools typically produce:

Access Controls

Every user must have a unique identifier. Access to PHI must be restricted based on role. Your app needs mechanisms for emergency access and automatic logoff. Vibe-coded apps typically implement basic username/password auth and call it done — no role-based permissions, no session expiry, no principle of least privilege.

Audit Controls

Your system must record and examine activity in information systems that contain or use PHI. This means comprehensive logging of every read, write, update, and delete operation on regulated data, with tamper-evident storage. Generic AI output gives you console.log("user logged in") at best.

Integrity Controls

You must implement mechanisms to authenticate electronic PHI and ensure it hasn't been improperly altered or destroyed. This goes beyond database backups — it includes checksums, versioning, and validation logic that vibe-coded apps almost never include.

Transmission Security

All PHI transmitted over a network must be encrypted. This means TLS for APIs, encrypted WebSocket connections, and secure file transfer protocols. AI-generated code often defaults to HTTP in development and never switches to HTTPS, or implements SSL without certificate pinning.

Meeting healthcare regulations at this level requires intentional architecture, not incidental code generation. The gap between what regulatory compliance demands and what a general-purpose AI produces is where expensive mistakes live.

Real Scenarios Where Vibe-Coded Health Apps Go Wrong

These scenarios are composites drawn from common patterns where AI-generated healthcare app compliance falls apart in practice. They illustrate how small oversights create serious patient privacy violations.

Scenario 1: The Chatbot That Logged Everything

A founder built a mental health chatbot using an AI coding assistant. The app worked well — empathetic responses, session history, mood tracking. But the AI-generated logging middleware captured full request and response bodies, including user messages describing symptoms, medications, and suicidal ideation. Those logs were stored in plaintext on a third-party logging service with no BAA. Every conversation was a potential data breach.

Scenario 2: The Analytics SDK That Phoned Home

A wellness app integrated a popular analytics SDK on the AI tool's recommendation. The SDK captured screen names, user interactions, and device identifiers — standard for a consumer app, disastrous for one displaying lab results. The analytics provider received PHI it never signed up to protect, and the developer had created an unauthorized disclosure without realizing it.

Scenario 3: The "Encrypted" Database That Wasn't

An AI tool generated a database layer with a function named encryptField(). On inspection, it was Base64 encoding — not encryption. Every "encrypted" patient record was trivially decodable. The founder had tested the app, seen the scrambled-looking output, and assumed it was secure. It passed a demo. It wouldn't pass an audit.

Scenario 4: The Session That Never Expired

A telehealth app's AI-generated auth system issued JWTs with no expiration. A stolen token meant permanent access to a patient's medical history, prescriptions, and provider notes. No session timeout, no token refresh, no revocation mechanism — just an open door. This is a textbook failure that HIPAA's access control requirements exist to prevent, and one that GDPR would also flag for any users in the EU.

Each of these scenarios started with a working app that looked production-ready. None of them were.

Can You Vibe Code a HIPAA-Compliant App? (The Honest Answer)

Yes — with significant caveats. HIPAA compliant AI app development is possible, but it requires treating AI-generated code as a first draft, not a finished product.

Raw LLM output is not HIPAA-ready by default. No general-purpose AI tool has been trained to prioritize healthcare software development compliance over functionality. But if you layer the right processes on top, you can preserve much of the speed advantage while closing the compliance gaps.

What you'd need to add manually: a thorough code review process focused specifically on PHI handling, security testing that includes both automated scanning and manual penetration testing, proper infrastructure configuration (encryption, access controls, logging), BAA coverage for every vendor in your stack, and ongoing monitoring.

The honest math: by the time you've added these layers, you've spent 60–80% of the time you "saved" by vibe coding. You're still ahead of a pure waterfall approach, but the margin is thinner than the initial weekend prototype suggests. For healthcare developers, the question isn't whether AI can help — it's whether you're using an AI tool that understands the domain you're building in.

How Specode's AI Builder Solves This

This is where the distinction between generic AI coding tools and a purpose-built HIPAA-Compliant app builder healthcare compliance platform matters.

Specode is an AI builder designed specifically for healthcare. It gives you the creative speed of vibe coding with compliance guardrails engineered into the foundation — not bolted on after the fact. Think of it as vibe coding with a compliance co-pilot.

Here's what that means in practice:

HIPAA-ready infrastructure from day one. Every app built on Specode runs on infrastructure that meets HIPAA technical safeguard requirements out of the box. You don't configure encryption — it's the default. You don't remember to enforce TLS — it's mandatory. The Specode AI builder handles the security by design decisions that generic tools leave to you.

PHI-aware data models. When you define a data field in Specode, the platform understands the difference between a marketing email and a patient record. Data models are built with PHI classification awareness, so regulated data gets the protections it needs automatically. This is a fundamental shift from general-purpose AI tools that treat all data identically.

BAA-covered stack. Specode provides a BAA as part of its platform offering. Your entire build pipeline — from data modeling to deployment — is covered. No more wondering whether your AI coding tool's terms of service leave you exposed.

Built-in compliance intelligence. Specode is developing a dedicated HIPAA agent that reviews your app's code and flags compliance risks as you build — think of it as a security-focused co-pilot that catches what generic AI tools miss entirely. Before any Specode app goes live, the Specode team provides a comprehensive HIPAA analysis, so you're not shipping blind and hoping for the best. And when it comes to audit logging, Specode gives you the tools to build it right: customers use Specode's AI to create custom audit logging functionality tailored to their app's specific data flows and access patterns.

Whether you're building a telehealth app development project, a symptom tracker app, or a patient engagement tool, Specode lets you move fast without the compliance debt.

For teams that need custom healthcare software development capabilities beyond what any no-code healthcare app platform offers — but don't want to start from scratch — Specode occupies a unique middle ground: a low-code health app environment with healthcare-grade compliance built into every layer.

Checklist: Before You Ship a Vibe-Coded Health App

Use this checklist to audit any AI-generated healthcare codebase before it touches real patient data. If you're still figuring out how to build a healthcare app the right way, start here. Healthcare app PHI compliance isn't optional — it's the price of admission.

- PHI identification audit. Have you mapped every data field that could contain or link to PHI? Including notes, timestamps, and metadata?

- Encryption verification. Is all PHI encrypted at rest (AES-256 or equivalent) and in transit (TLS 1.2+)? Verify — don't trust function names.

- Access control review. Does your app enforce role-based access, unique user IDs, automatic session expiry, and emergency access procedures?

- Audit log implementation. Are all PHI access events (read, write, update, delete) logged immutably with timestamps and user identification?

- BAA coverage check. Do you have a signed BAA with every vendor that touches PHI — including your hosting provider, database service, email sender, and analytics tools?

- API security assessment. Are all endpoints authenticated, rate-limited, and scoped to minimum necessary data? Run a penetration test.

- Third-party SDK audit. Do any integrated SDKs or libraries capture, transmit, or store PHI? Check their data collection policies line by line.

- Session management validation. Do tokens expire? Is there a revocation mechanism? Are sessions terminated on inactivity?

- De-identification verification. If you claim data is de-identified, have you confirmed removal of all 18 HIPAA identifiers using the Safe Harbor method?

- Incident response plan. Do you have a documented process for breach notification within the 60-day HIPAA window?

If checking every box feels overwhelming, that's the point. Get started with Specode and get most of these handled by default.

Build Fast, Ship Safe

Using AI to build a healthcare app is not inherently risky — building one without understanding where the compliance boundaries are is. The founders who succeed in this space aren't the ones who avoid AI tools; they're the ones who choose AI tools built for healthcare.

Vibe coding changed how fast we can go from idea to prototype. Platforms like Specode change whether that prototype can actually ship. Healthcare app development doesn't have to be slow, but it does have to be safe.

The best path forward: keep the creative momentum that makes vibe coding powerful, and pair it with a platform that knows what PHI is before you have to explain it. That's the real unlock — building healthcare app with AI speed and healthcare-grade safety at the same time.

Frequently asked questions

Vibe coding can be part of a safe healthcare development workflow, but only with proper safeguards. Generic AI code generators produce functional code that typically lacks the encryption, access controls, audit logging, and PHI handling that healthcare regulations require. The code needs thorough review and hardening before it's safe for production use with patient data.

No. AI-generated healthcare app compliance is not a default output of any general-purpose coding tool. LLMs optimize for functionality, not regulatory compliance. HIPAA's technical safeguards — encryption, audit controls, access management, transmission security — must be explicitly implemented and verified, regardless of how the initial code was produced.

PHI is any individually identifiable health information handled by a covered entity or business associate. If your app collects a person's name, email, or device ID alongside any health-related data — mood scores, appointment times, medication logs, symptom notes — you are likely handling PHI. The threshold is lower than most founders assume.

HIPAA's Security Rule mandates four categories of technical safeguards: access controls (unique user IDs, role-based permissions, auto-logoff), audit controls (logging all PHI access and modifications), integrity controls (mechanisms to prevent unauthorized alteration of PHI), and transmission security (encryption of PHI in transit). Each must be implemented, tested, and documented.

If you input actual PHI into the AI tool — for example, pasting real patient data into a prompt for debugging — then yes, you likely need a BAA with that provider. Most popular AI coding tools (Cursor, Copilot, Replit) do not currently offer BAAs. The safest practice is to never input real PHI into any tool that isn't covered by a signed BAA.

Specode is purpose-built for healthcare app development. Unlike general-purpose AI coding tools, it provides HIPAA-ready infrastructure, PHI-aware data models, and a BAA-covered stack as part of the platform. Where generic tools leave compliance as an afterthought, Specode treats it as a foundation — so you get the speed of AI-assisted building without inheriting a compliance debt that takes months to resolve.