Is Cursor HIPAA Compliant?

Cursor has quickly become one of the most popular AI coding assistants on the market, adopted by 64% of Fortune 500 companies and valued at over $29 billion as of 2025. If you're building healthcare software — or even maintaining it — there's a decent chance Cursor is already part of your workflow.

But here's the question that keeps coming up in healthtech Slack channels and founder circles: is Cursor HIPAA compliant?

It's not an academic question. If your code touches protected health information (PHI) at any point in the development lifecycle, HIPAA's requirements extend to the tools in your workflow, not just the app you ship to patients. And the stakes are real: civil penalties start at $141 per violation and can exceed $2 million per calendar year for violations of the same requirement.

This article breaks down exactly what Cursor does with your code, whether it offers the compliance infrastructure healthcare teams need, and what alternatives exist when you need an AI coding assistant that is HIPAA-ready from day one.

Quick question: Is Cursor HIPAA compliant?

Quick answer: No. Cursor does not offer a Business Associate Agreement (BAA) on any plan tier, and all code is transmitted to cloud infrastructure that isn't covered for PHI handling. Privacy Mode protects intellectual property, not regulated health data. Healthcare teams building with PHI in scope need a platform with BAA coverage built in.

Key Takeaways:

- Cursor has no BAA and no HIPAA compliance path. Code is sent to Cursor's servers and routed through multiple subprocessors — none covered for PHI. Privacy Mode reduces data retention but doesn't address HIPAA's requirements for audit trails, technical safeguards, or business associate agreements.

- HIPAA applies to your dev tools, not just your production app. If PHI can enter your codebase through test data, code comments, prompts, or debugging sessions, every tool vendor in that workflow becomes a business associate, including the maker of your AI code editor.

- You don't need to be a developer to build compliant healthcare apps. Platforms like Specode let clinician-founders and non-technical operators describe what they need in plain language, and the AI builds it on a HIPAA-ready foundation — with a BAA included on the Pro plan.

What Is Cursor?

Cursor is an AI-first code editor built on Visual Studio Code, developed by Anysphere, Inc. It's not just an autocomplete plugin. It's a full IDE replacement that uses large language models for code generation, chat-based debugging, multi-file editing, and codebase-wide context awareness.

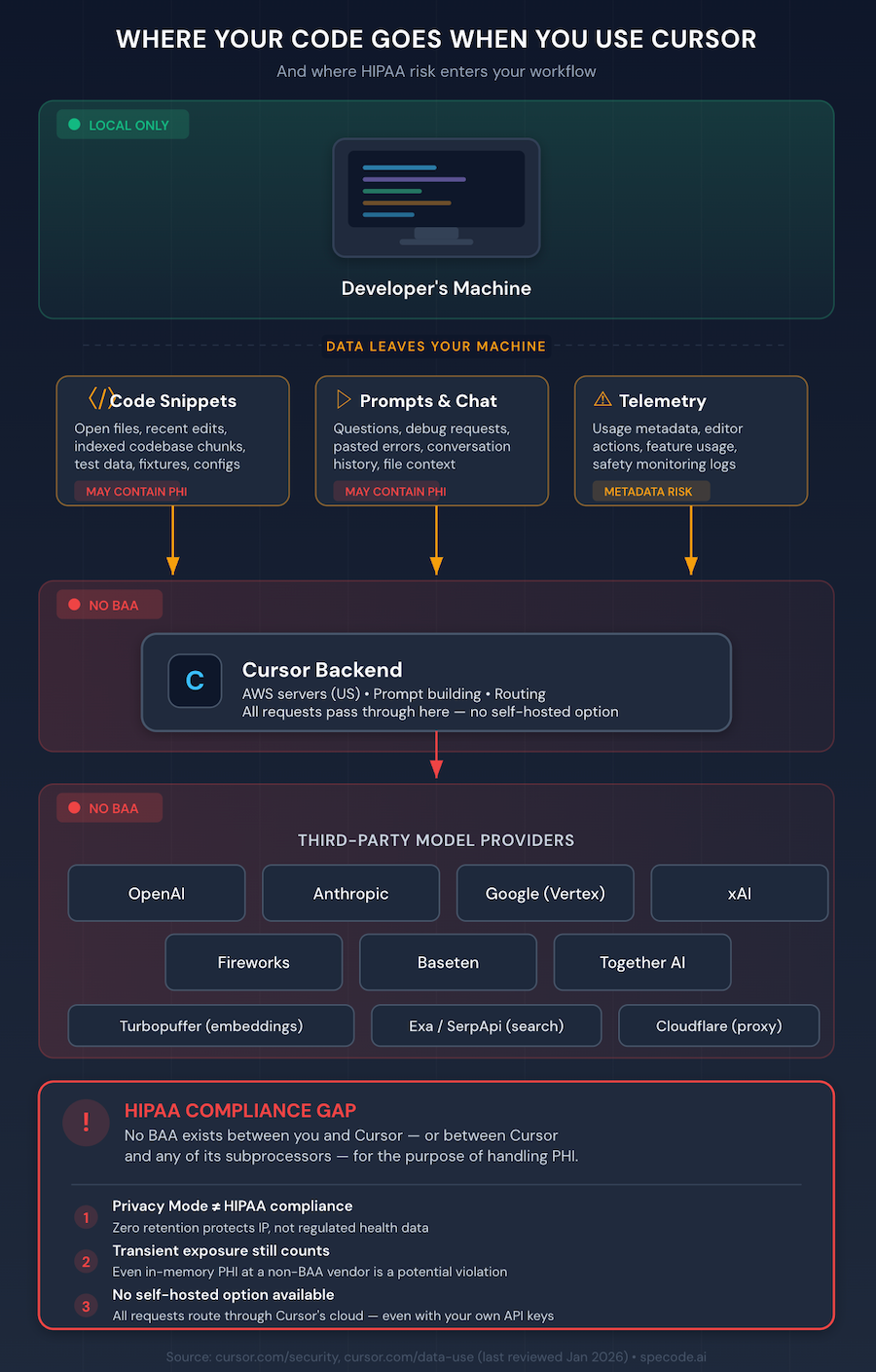

Here's where the HIPAA concerns come into play at a technical level. When you use Cursor, several things happen behind the scenes:

- Codebase indexing. Cursor computes embeddings for your codebase by sending code chunks to its servers. The embeddings and obfuscated file path metadata are stored in Turbopuffer (hosted on Google Cloud in the US). Cursor states that for Privacy Mode users, no plaintext code is permanently stored — but chunks are transmitted server-side for embedding computation.

- AI requests. Every interaction with Cursor's AI features — chat, tab completions, background context-building, even bug detection — sends code data to Cursor's AWS backend, which routes it to model providers like OpenAI, Anthropic, Google (Vertex), Fireworks, Baseten, Together, or xAI.

- Prompt content. Your recently viewed files, conversation history, and relevant code snippets are included in every AI request as context.

The important takeaway: even with Privacy Mode enabled, your code is still transmitted in real time to Cursor's infrastructure and its subprocessors. There's no option for using self-hosted models and no way to direct-route to your own enterprise model deployment — Cursor's prompt-building happens on their servers.

How HIPAA Applies to Developer Tools

This is the part many developers miss: HIPAA's requirements don't stop at the production app. They apply to every tool that creates, receives, maintains, or transmits PHI — including tools used during development.

Cursor HIPAA compliance becomes a relevant concern the moment there's a plausible path for PHI into the editor. And in practice, that path is shorter than most teams realize. Consider how PHI can end up in a development workflow:

- Test data and fixtures with real or insufficiently de-identified patient records.

- Code comments referencing specific patients, conditions, or identifiers.

- Database schema and migration files that reveal the structure of PHI storage.

- Environment variables or config files containing connection strings to PHI-bearing databases.

- Debugging sessions where a developer pastes an actual API response to troubleshoot a bug.

Under HIPAA, a vendor whose tool creates, receives, maintains, or transmits this data on your behalf, even transiently, is a business associate. That vendor must sign a business associate agreement (BAA), implement the Security Rule's technical safeguards, and maintain audit logs. Without that chain of agreements in place, even brief, in-memory exposure of PHI to the tool can constitute a HIPAA violation.

A security risk analysis needs to account for every tool that touches data flows containing PHI — and AI coding assistants are no exception. The same logic applies to any platform in your stack. If a tool can't produce a BAA, it doesn't belong in a PHI workflow.
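The last path on the list above, pasting a live API response into chat while debugging, is also one of the easiest to guard against mechanically. The Python sketch below masks values stored under PHI-like keys before a payload is shared anywhere. The `PHI_KEYS` set and the `redact` helper are hypothetical; key-based masking misses identifiers buried in free-text fields, so this reduces risk but is not de-identification under HIPAA and does not substitute for a BAA.

```python
import json
from typing import Any

# Hypothetical set of keys treated as PHI; derive the real list from your own schema.
PHI_KEYS = {
    "name", "first_name", "last_name", "mrn", "ssn", "dob",
    "birth_date", "address", "phone", "email",
}


def redact(value: Any) -> Any:
    """Recursively replace values stored under PHI-like keys with a placeholder.

    Only keys are inspected: PHI inside free-text values (notes, error messages)
    passes through untouched.
    """
    if isinstance(value, dict):
        return {
            key: "[REDACTED]"
            if isinstance(key, str) and key.lower() in PHI_KEYS
            else redact(item)
            for key, item in value.items()
        }
    if isinstance(value, list):
        return [redact(item) for item in value]
    return value


if __name__ == "__main__":
    response = json.loads('{"patient": {"mrn": "12345", "name": "Jane Roe"}, "status": 500}')
    print(json.dumps(redact(response)))
```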


Is Cursor HIPAA Compliant? The Direct Answer

No. As of early 2026, Cursor is not HIPAA compliant. The core issue is straightforward: Cursor does not offer a BAA on any plan.

Here's what Cursor's own documentation tells us:

Privacy Policy (last updated October 6, 2025)

Anysphere collects and processes code data through its service. With Privacy Mode off (the default on Free and Pro plans), Cursor may use codebase data, prompts, editor actions, and code snippets to improve its AI features and train its models. Prompts and telemetry may also be shared with model providers.

With Privacy Mode On

Cursor enables zero data retention at its model providers and commits to not training on your code. However, code data is still transmitted to Cursor's backend and routed through multiple subprocessors — AWS, Cloudflare, Azure, GCP, and whichever model provider handles the request. None of these subprocessors are covered under a BAA with Cursor for the purpose of handling PHI.

Enterprise/Business Plan

Privacy Mode is enabled by default and enforced. But even on the Business tier, Cursor's security page makes no mention of HIPAA compliance, BAA availability, or PHI handling. Their own security page includes this candid note: "If you're working in a highly sensitive environment, you should be careful when using Cursor (or any other AI tool)."

SOC 2

Cursor holds SOC 2 Type II certification. That's a meaningful security baseline, but SOC 2 is not a substitute for HIPAA compliance — it doesn't address BAAs, PHI handling, or the specific administrative, physical, and technical safeguards required by the HIPAA Security Rule.

The bottom line: without a BAA, using Cursor in any workflow where PHI is present, even briefly and even in-memory, creates a compliance gap that flows directly back to you as the covered entity or business associate. Whether you're building a telehealth app or a patient portal, the same rule applies.

The Hidden PHI Risks in Your Cursor Workflow

Even developers who are careful about PHI often underestimate how a Cursor-based healthcare workflow can create exposure. Here are the specific, non-obvious risks:

1. Codebase Indexing Sends Code to the Cloud

If PHI strings exist anywhere in your repository — test fixtures, seed data, code comments, configuration files — codebase indexing will send those chunks to Cursor's servers for embedding. The .cursorignore file lets you exclude specific paths, but it requires you to know exactly where PHI might appear, and Cursor themselves describe it as a "best effort" mechanism.

2. Prompt Content Can Contain PHI

When you ask Cursor's chat a question or accept a tab completion, your recently viewed files and relevant code snippets are included as context. If you've been reviewing a file that contains PHI, that data is part of the prompt.

3. AI Chat Referencing Patient Data in Code Comments

Developers frequently leave comments like // handles the case where patient John Doe has multiple active prescriptions or // edge case from ticket #4521 — patient MRN 12345. These comments are indexed, embedded, and sent as context in AI requests.
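Comments like these can be caught before they are committed, and therefore before they are indexed. The sketch below is a simple heuristic scan, not a HIPAA de-identification tool: the regular expressions (an `MRN` label followed by digits, an SSN-shaped number, a labeled date of birth) and the `find_phi_like_comments` function are illustrative assumptions, and a real check would need patterns tuned to your organization's identifiers.

```python
import re
from typing import Iterable, List, Tuple

# Hypothetical, illustrative patterns. A regex scan cannot prove data is
# de-identified; it only flags obvious identifiers for human review.
PHI_PATTERNS = {
    "mrn": re.compile(r"\bMRN[:#\s]*\d{4,}\b", re.IGNORECASE),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "dob": re.compile(
        r"\b(?:DOB|date of birth)[:\s]*\d{1,4}[/-]\d{1,2}[/-]\d{1,4}\b",
        re.IGNORECASE,
    ),
}

# Captures the text of a // or # line comment. Simplified: ignores block
# comments and can misfire on comment markers inside string literals.
COMMENT_RE = re.compile(r"(?://|#)(.*)$")


def find_phi_like_comments(lines: Iterable[str]) -> List[Tuple[int, str]]:
    """Return (line_number, pattern_name) for each comment matching a PHI-like pattern."""
    hits = []
    for lineno, line in enumerate(lines, start=1):
        match = COMMENT_RE.search(line)
        if not match:
            continue
        comment = match.group(1)
        for name, pattern in PHI_PATTERNS.items():
            if pattern.search(comment):
                hits.append((lineno, name))
    return hits


if __name__ == "__main__":
    sample = [
        "const dose = 5; // edge case from ticket #4521 - patient MRN 12345",
        "# handles the case where DOB: 1980-01-02 is missing",
        "x = 1  # plain comment",
    ]
    for lineno, name in find_phi_like_comments(sample):
        print(f"line {lineno}: possible {name}")
```

Wiring a check like this into a pre-commit hook that blocks on any hit is one possible design; expect both false positives and missed identifiers, so it complements rather than replaces the data-hygiene rules below.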

4. Telemetry and Logging

Even with Privacy Mode on, Cursor collects usage telemetry. While Cursor states this doesn't include code content in Privacy Mode, the boundary between "metadata" and "data" can blur when audit logs and safety monitoring are involved.

5. No BAA Means Any Exposure Is a Potential Violation

This is the crux. All the risks above might be manageable if there were a signed BAA establishing Cursor as a business associate with defined obligations. Without one, even a single instance of PHI transiting Cursor's infrastructure — regardless of retention — can trigger a data breach notification obligation.

What About Cursor's Privacy Mode and Enterprise Plan?

Cursor AI PHI handling is often misunderstood because Privacy Mode sounds like it solves the compliance problem. It doesn't — but it's worth understanding what it actually does.

Privacy Mode (available on all plans):

- Enables zero data retention at model providers (OpenAI, Anthropic, etc.).

- Your code is not used as training data by Cursor or third parties.

- Cached file contents are encrypted with client-generated keys and stored only temporarily.

What Privacy Mode Does NOT Do:

- It does not prevent code from being transmitted to Cursor's servers and subprocessors.

- It does not create a BAA between you and Cursor (or between Cursor and its subprocessors for PHI purposes).

- It does not provide the durable audit trails that HIPAA's Security Rule requires — in fact, the zero-retention design actively works against this requirement.

- It does not prevent transient, in-memory exposure of PHI at non-BAA-covered vendors.

Business/Enterprise Plan:

- Privacy Mode is enforced (on by default, cannot be turned off).

- SOC 2 Type II certified.

- No BAA publicly offered — though Cursor's security page notes that "healthcare organizations should discuss specific requirements with Cursor directly."

The Cursor privacy policy is clear about what it does and doesn't cover. Adjusting Cursor privacy settings — enabling Privacy Mode, configuring indexing preferences — improves prompt privacy for intellectual property purposes. But it was not built for regulated data. Cursor deserves credit for being transparent about this, but transparency doesn't change the compliance posture.

How Cursor Compares to Other AI Coding Tools on HIPAA

If you're evaluating AI code editors for compliance, here's where the major tools stand. This matters because Cursor-versus-Copilot comparisons come up frequently in healthcare, and the answer isn't as simple as "just use Copilot instead."

A few nuances worth noting:

GitHub Copilot HIPAA status is commonly misunderstood. Microsoft 365 Copilot for Enterprise is covered under Microsoft's BAA. GitHub Copilot — the coding assistant — is explicitly not intended to process PHI or operate under a HIPAA BAA, per Microsoft's own compliance documentation. They are different products with different compliance postures.

Amazon Q Developer is similarly explicit: AWS's own guidance states that Q Developer "is not designed to transmit, store, or process ePHI." Q Business is HIPAA-eligible, but Q Developer is not.

Tabnine takes a different approach — its self-hosted and air-gapped development and deployment options mean code never leaves your infrastructure, which lets your organization apply its own HIPAA controls. BAAs are available for enterprise customers by arrangement. For teams that prefer working with a healthcare app development company rather than managing compliance in-house, this self-hosted flexibility can be paired with external compliance support.

Windsurf has the strongest compliance posture among AI code editors, with SOC 2 Type II, FedRAMP High accreditation, and HIPAA compliance with BAAs available. It also offers self-hosted deployment and EU data residency for GDPR requirements.

Can You Use Cursor Safely for Healthcare Development?

If you're considering Cursor for healthcare development, here's a practical, honest assessment of what's possible and where the limits are.

Mitigations That Reduce (but don't eliminate) Risk

- Never include real PHI in your codebase. Use synthetic or fully de-identified test data. This is good practice regardless of tooling, but it's especially critical with any AI assistant that sends code to the cloud.

- Enable Privacy Mode. It won't make Cursor HIPAA compliant, but it minimizes data retention and prevents training on your code.

- Use .cursorignore aggressively. Exclude any directories that might contain sensitive data — test fixtures, seed data, environment files, data migration scripts.

- Audit your prompts. Be conscious of what you paste into Cursor's chat. Never paste real patient data, API responses containing PHI, or database query results.

- Get legal advice. If your organization is a covered entity or business associate, have your compliance team formally assess whether Cursor fits within your compliance posture. The answer is almost certainly "not without a BAA," but document the decision either way.
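The first mitigation above matters most, and it is easy to automate. Below is a minimal sketch of a seeded generator that fabricates obviously fake patient records for fixtures, using only the Python standard library. The field names, the `make_synthetic_patients` helper, and the `TEST-` MRN prefix are hypothetical conventions, chosen so synthetic records are easy to recognize and nothing ever needs to be copied from production.

```python
import random
from dataclasses import asdict, dataclass
from datetime import date, timedelta
from typing import List

# Hypothetical name pools: clearly fictional values, never drawn from real records.
FIRST_NAMES = ["Test", "Sample", "Demo", "Synthetic"]
LAST_NAMES = ["Patient", "Person", "Record"]


@dataclass
class SyntheticPatient:
    mrn: str          # "TEST-" prefix makes synthetic IDs easy to tell apart from real MRNs
    first_name: str
    last_name: str
    birth_date: str   # ISO 8601 date string


def make_synthetic_patients(count: int, seed: int = 0) -> List[SyntheticPatient]:
    """Generate reproducible, obviously fake patient records for test fixtures."""
    rng = random.Random(seed)  # seeded so fixtures are stable across test runs
    start = date(1940, 1, 1)
    patients = []
    for i in range(count):
        birth = start + timedelta(days=rng.randrange(365 * 60))
        patients.append(
            SyntheticPatient(
                mrn=f"TEST-{i:06d}",
                first_name=rng.choice(FIRST_NAMES),
                last_name=rng.choice(LAST_NAMES),
                birth_date=birth.isoformat(),
            )
        )
    return patients


if __name__ == "__main__":
    for patient in make_synthetic_patients(3, seed=42):
        print(asdict(patient))
```

Seeding the generator keeps fixtures deterministic, so snapshot tests stay stable without anyone being tempted to paste in "just one" real record.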

The Honest Residual Risk

Even with all these mitigations, you're operating without a BAA. If PHI inadvertently enters your workflow (through a developer's mistake, an overlooked test file, or a code comment that references a real patient), you have no contractual coverage and no defined breach notification pathway with Cursor. For most compliance officers, that rules out any developer tool in a PHI workflow.

If your team vibe codes healthcare apps, building fast with AI assistance, you need to be especially disciplined about data hygiene, because the speed of vibe coding often conflicts with the care required to keep PHI out of prompts.

The Safer Alternative: Building with Specode

When your healthcare development stack needs to be compliant from day one, the solution isn't to bolt compliance onto a general-purpose code editor. It's to start with a platform built for healthcare.

Specode is an AI-powered healthcare app builder — not a code editor, but a purpose-built platform where compliance infrastructure, BAAs, and security safeguards are part of the foundation rather than afterthoughts.

Here's what that looks like in practice:

- HIPAA-compliant foundation: End-to-end data encryption, secure authentication, and protected data storage (Convex, which holds SOC 2 Type II attestation) are built into every layer.

- BAA included: On the Pro plan, the backend hosting BAA is included with your production deployment. No separate hosting account to set up, no BAA negotiation process to manage.

- Compliance review before go-live: Specode's team reviews your app before production deployment to ensure HIPAA compliance. You're not left to figure out access controls, audit logging, and data retention policies on your own — the AI helps you implement them.

- Full code ownership: Unlike no-code platforms, Specode generates real code (React, Tailwind, Convex) that you own 100%. Export it, modify it, deploy it elsewhere — no vendor lock-in.

- Speed without shortcuts. Teams report launching basic telehealth projects in 1–2 weeks and full platforms in 4–8 weeks — compared to the 6–12 month timelines typical of custom healthcare software development.

For teams comparing Specode and Cursor for healthcare work, the tools serve different roles. Cursor is a powerful code editor that accelerates individual developer productivity. Specode is a healthcare AI builder that accelerates the entire build-to-compliance pipeline.

The question isn't whether Cursor is a good tool — it is. The question is whether it's the right tool when PHI is in scope. For most healthcare teams, the answer is no.

So, Should You Use Cursor for Healthcare Apps?

Cursor is an exceptional AI coding assistant. It's fast, powerful, and genuinely makes developers more productive. But it is not HIPAA compliant, it does not offer a BAA, and it sends code to cloud infrastructure that isn't covered for PHI handling. No amount of Privacy Mode configuration changes that.

Here's the practical recommendation by audience:

- Hobbyist or pre-revenue founder building without PHI: Cursor is fine. Just don't use real patient data in your codebase.

- Covered entity or business associate building with PHI in scope: Cursor creates unacceptable compliance risk. Use a HIPAA compliant AI coding assistant, a self-hosted solution like Tabnine, or a purpose-built healthcare platform like Specode.

- Technical founder evaluating build options: Consider whether bolting compliance onto a general-purpose editor is the right approach, or whether starting with a healthcare-native platform gets you to market faster with less risk.

The compliance posture of AI developer tools is evolving quickly. HHS OCR proposed the first major HIPAA Security Rule update in 20 years in January 2025, with AI systems that process PHI subject to enhanced scrutiny. The tools you choose today will define your compliance posture tomorrow.

Frequently asked questions

Is Cursor HIPAA compliant?

No. Cursor does not advertise HIPAA compliance, and its security and privacy documentation make no claims about HIPAA readiness on any plan tier. The tool lacks the BAA infrastructure required for handling PHI.

Does Cursor offer a BAA?

No. As of early 2026, Cursor does not publicly offer a Business Associate Agreement on any plan (Free, Pro, Business, or Enterprise). Its security page suggests healthcare organizations discuss requirements directly, but no BAA is documented.

Can I use Cursor for healthcare app development?

You can use Cursor to write code, but you must ensure PHI never enters the development workflow: not in test data, not in code comments, not in prompts, and not in indexed files. Without a BAA, any PHI exposure through Cursor is a potential HIPAA violation.

Does Privacy Mode make Cursor HIPAA compliant?

No. Privacy Mode enables zero data retention at model providers and prevents training on your code. But it does not prevent code transmission, does not establish a BAA, and does not provide the audit trails HIPAA requires. It protects intellectual property, not regulated health data.

Is GitHub Copilot HIPAA compliant?

GitHub Copilot, the coding assistant, is not intended to process PHI or operate under a HIPAA BAA, per Microsoft's own documentation. Microsoft 365 Copilot for Enterprise is a different product that is covered under Microsoft's BAA when properly configured.

Which AI coding tools are better suited to HIPAA workflows?

Tools with self-hosted or air-gapped deployment options (Tabnine, Windsurf) give you the most control. Windsurf offers a BAA for enterprise deployments and holds FedRAMP High accreditation. For a purpose-built healthcare platform with a BAA included, Specode is designed specifically for this use case.

What happens if PHI is exposed through Cursor?

Without a BAA, transmitting PHI to Cursor's servers, even transiently, is an impermissible disclosure under HIPAA. It is presumed to be a reportable breach unless a risk assessment shows a low probability that the PHI was compromised, and it can lead to an OCR investigation and civil penalties ranging from $141 per violation to more than $2 million per calendar year, depending on the level of culpability.